ChatGPT leaks bits of users’ chat history

New gadgets and software come with new bugs, especially if they’re rushed. We can see this very clearly in the race between tech giants to push large language models (LLMs) like ChatGPT and its competitors out the door. In the most recently revealed LLM bug, ChatGPT allowed some users to see the titles of other users’ conversations.

LLMs are huge deep neural networks trained on billions of pages of written material.

In the words of ChatGPT itself:

“The training process involves exposing the model to vast amounts of text data, such as books, articles, and websites. During training, the model adjusts its internal parameters to minimize the difference between the text it generates and the text in the training data. This allows the model to learn patterns and relationships in language, and to generate new text that is similar in style and content to the text it was trained on.”
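To make that description a little more concrete, here is a toy sketch of the same idea at a vastly smaller scale. It is not how ChatGPT is built: the character-level "bigram" model, the function names, and the sample sentence are all assumptions chosen for illustration. The only thing it shares with a real LLM is the principle of nudging parameters so that the text seen in training becomes more likely.

```python
import math


def softmax(logits):
    """Turn a list of raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train_bigram(text, steps=200, lr=0.5):
    """Fit a toy next-character model with plain gradient descent.

    Hypothetical illustration only: the "internal parameters" are one
    score per (previous character, next character) pair.
    """
    vocab = sorted(set(text))
    index = {ch: i for i, ch in enumerate(vocab)}
    pairs = [(index[a], index[b]) for a, b in zip(text, text[1:])]
    n = len(vocab)
    logits = [[0.0] * n for _ in range(n)]
    losses = []

    for _ in range(steps):
        grads = [[0.0] * n for _ in range(n)]
        loss = 0.0
        for prev, nxt in pairs:
            probs = softmax(logits[prev])
            # Cross-entropy: penalize low probability for the real next character.
            loss -= math.log(probs[nxt])
            for j in range(n):
                grads[prev][j] += probs[j] - (1.0 if j == nxt else 0.0)
        losses.append(loss / len(pairs))
        # Adjust the parameters a small step in the direction that lowers the loss.
        for i in range(n):
            for j in range(n):
                logits[i][j] -= lr * grads[i][j] / len(pairs)

    return vocab, logits, losses


vocab, logits, losses = train_bigram("the cat sat on the mat")
print(f"loss before training: {losses[0]:.3f}, after: {losses[-1]:.3f}")
```

The loss printed at the end is the "difference between the text it generates and the text in the training data" from the quote. It starts at the level of pure guessing and drops as the scores are adjusted.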

We have written before about tricking LLMs into behaving in ways they aren’t supposed to. We call that jailbreaking. And I’d say that’s fine. It’s all part of what could be seen as a beta-testing phase for these complex new tools. As long as we report the ways in which we are able to exceed the limitations of the model and give the developers a chance to tighten things up, we’re working together to make the models better.

But when a model spills information about other users, we stumble into an area that should have been sealed off already.

To better understand what happened, it helps to have some basic working knowledge of how these models work. To improve the quality of the responses they get, users can organize their conversations with the LLM into threads, so that both the model and the user can look back and see what ground they have covered and what they are working on.

With ChatGPT, each conversation with the chatbot is stored in the user’s chat history bar where it can be revisited later. This gives the user an opportunity to work on several subjects and keep them organized and separate.
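The property users expect from such a history can be sketched in a few lines. This is a hypothetical illustration, not OpenAI’s implementation: the `Conversation` and `HistoryStore` names and the in-memory list are assumptions. The point is simply that every lookup is scoped to the user who owns the conversation.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    owner_id: str  # the user this conversation belongs to
    title: str     # what appears in the chat history bar
    messages: list = field(default_factory=list)


class HistoryStore:
    """Hypothetical in-memory chat history, for illustration only."""

    def __init__(self):
        self._conversations = []

    def add(self, owner_id, title):
        convo = Conversation(owner_id, title)
        self._conversations.append(convo)
        return convo

    def titles_for(self, user_id):
        # Only return titles whose owner matches the requesting user.
        return [c.title for c in self._conversations if c.owner_id == user_id]


store = HistoryStore()
store.add("alice", "Draft resignation letter")
store.add("bob", "Holiday meal ideas")
print(store.titles_for("alice"))
```

In a real service there are many more layers between the stored data and the history bar, such as caches and connection pools, and the ownership check has to hold across all of them.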

Showing this history to other users would, at the very least, be annoying and unacceptable, because it could be embarrassing or even give away sensitive information.

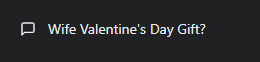

Nevertheless, this is exactly what happened. At some point, users started noticing items in their history that weren’t their own.

Although OpenAI reassured users that others could not access the actual chats, users were understandably worried about their privacy.

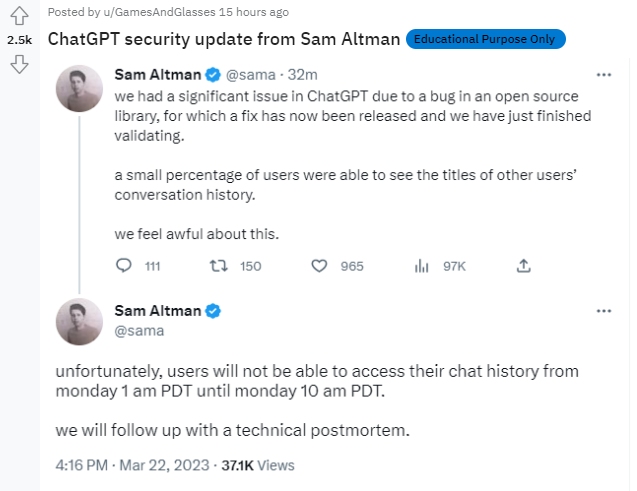

According to an OpenAI spokesperson on Reddit, the underlying bug was in an open source library.

OpenAI CEO Sam Altman said the company feels “awful”, but the “significant” error has now been fixed.

Things to remember

Giant, interactive LLMs like ChatGPT are still in the early stages of development and, despite what some want us to believe, they are neither the answer to everything nor the end of the world. At this point they are just very limited search engines that rephrase what they found about the subject you asked about. An “old-fashioned” search engine, by contrast, shows you possible sources of information and lets you decide which ones are trustworthy and which ones aren’t.

When you are using any of the LLMs, remind yourself that they are still very much in a testing phase. That means:

- Do not feed them private or sensitive information about yourself or your employer. Other leaks are likely and may be even more embarrassing.

- Take the results with more than just a grain of salt. Because the models don’t provide sources of information, you can’t know where their ideas came from.

- Make yourself familiar with the LLM’s limitations. It helps to understand how up to date the information it uses is and the subjects it can’t converse freely about.
