You may or may not be familiar with the furore over Deepfakes, a relatively new development in pornography involving a tool called FacesApp, which is capable of producing a real porn clip in which the original actors’ heads are replaced with those of celebrities, or indeed anyone at all.

Faked celebrity photos have been around online since the early 2000s or possibly even earlier; alongside those old photos, fakers would also make the odd terrible porno flick. Those movies would quite literally be a static cutout of a celebrity’s head stuck onto a performer’s body. Some 20 years later, the tech has caught up, and the web is suddenly dealing with the fallout.

FacesApp allows people to “train” an AI to create a realistic head so the scene is practically indistinguishable from reality. The AI is trained by feeding it images or footage of a person; the more data it has to work from, the more realistic the result.

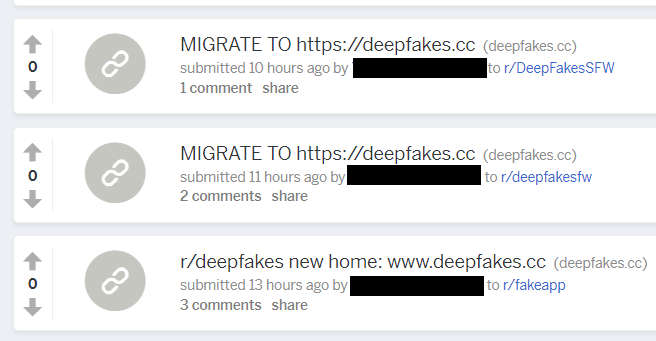

After a media firestorm, the inevitable has happened. All of the Deepfake subreddits, where the majority of content was being created, have been taken offline. Major players such as Twitter and PornHub had already effectively banned Deepfake content from their networks.

The Deepfake tech is available for pretty much anyone to make use of—the only real barrier to entry is having a powerful PC capable of withstanding the intensive training process, which can take hours or days to complete.

Now, if you were a crafty cybercriminal and knew that the main Deepfakes sources were taken offline, with a sizable community of content consumers and creators with heavy-duty PC rigs suddenly set adrift, what would you do?

The answer, of course, is to monetize potentially dubious fakes that you didn’t create yourself and hammer visitors’ PCs with mining scripts.

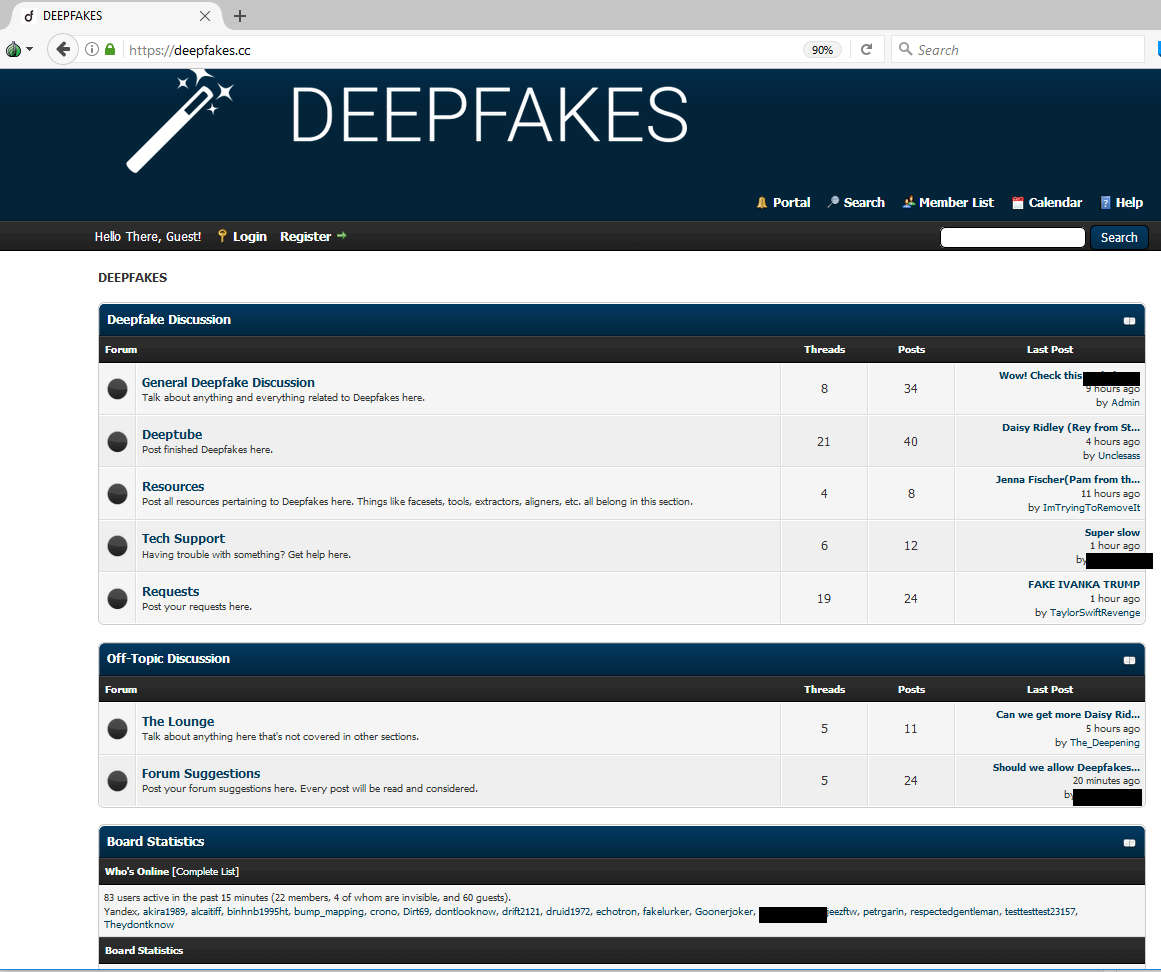

One of the most popular “lifeboat” sites we’ve seen for those unceremoniously dumped from the tender embrace of Reddit was being promoted pretty heavily on surviving subreddits:

On the surface, it looks like a fairly typical forum, and it’s been getting a fair bit of activity so far. It all looks legit—or at least as legit as can be given the controversial content on offer:

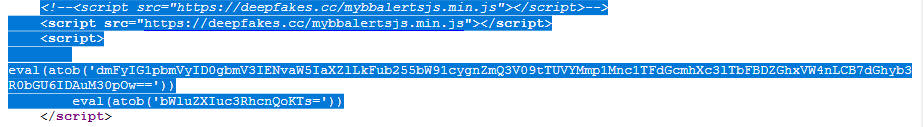

A quick check of the source code, while your CPU likely ramps up to 100 percent, would tell a slightly different story:

We have some JavaScript located at:

/mybbalertsjs(dot)min(dot)js
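
If you'd rather not eyeball the page source by hand, a quick check like this can be roughed out in a few lines of Python. This is only a sketch using the standard library's `html.parser`; the `ScriptSrcCollector` class, the `list_script_sources` helper, and the sample page (including its file names) are made up for illustration and are not taken from the site in question.

```python
from html.parser import HTMLParser


class ScriptSrcCollector(HTMLParser):
    """Collect the src attribute of every <script> tag in an HTML page."""

    def __init__(self):
        super().__init__()
        self.sources = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names for us.
        if tag == "script":
            src = dict(attrs).get("src")
            if src:
                self.sources.append(src)


def list_script_sources(html):
    """Return the external script paths referenced by the page, in order."""
    collector = ScriptSrcCollector()
    collector.feed(html)
    return collector.sources


# Hypothetical page snippet; the file names are invented for this example.
sample_page = """
<html><head>
<script src="/jscripts/jquery.js"></script>
<script src="/jscripts/example-alerts.min.js"></script>
<script>var inline = 1;</script>
</head></html>
"""

print(list_script_sources(sample_page))
```

Inline scripts with no `src` are skipped here, so this only lists external files worth a closer look.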

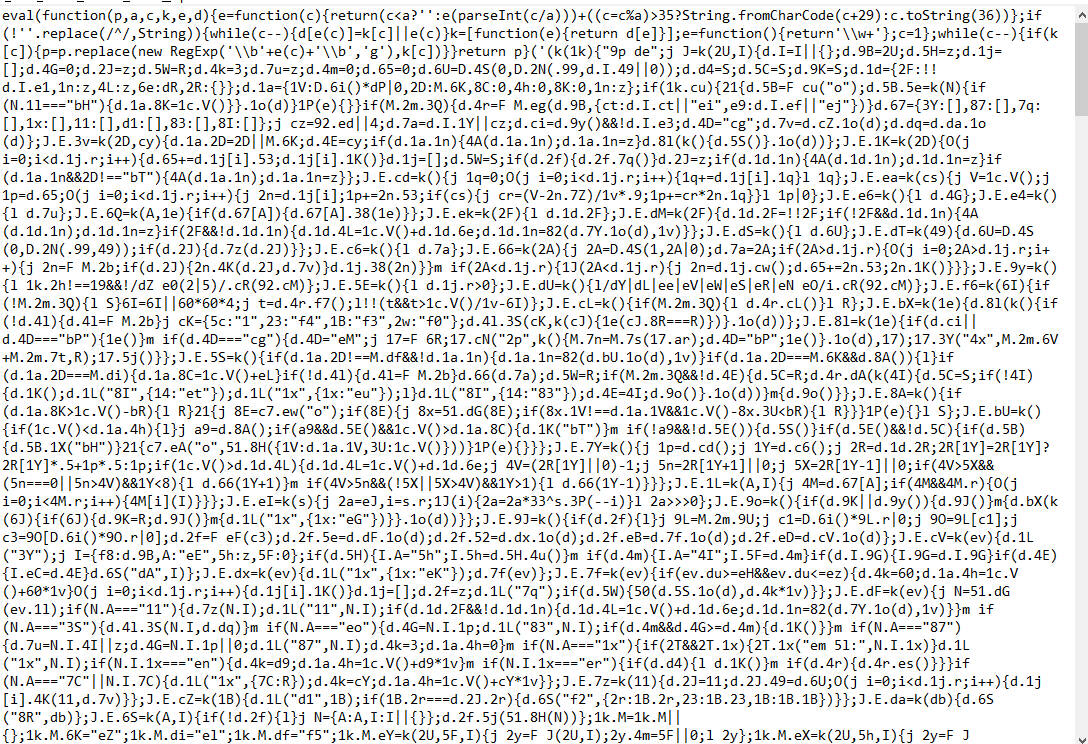

Sure, you could try to make sense of it as is. Or you could just unpack it and save yourself a headache, because that is a large, confusing pile of code. What is it doing?

var Miner=function

…miner…function? Did this site place mining scripts in the background?

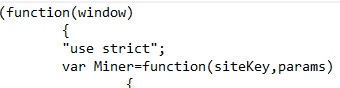

self.CoinHive.CONFIG=

They sure did, and we block both the mining and the website in question.
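
For the curious, the two giveaway strings quoted above are easy to hunt for once a script has been unpacked. The Python below is a minimal sketch of that idea and not how our blocking actually works; `MINER_PATTERNS`, `find_miner_indicators`, and both sample strings are hypothetical. It also assumes the code has already been unpacked, since a packed script may not contain these strings in plain form.

```python
import re

# Indicator strings taken from the fragments quoted in this post.
# Spacing around "=" and "." can vary, so the patterns tolerate it.
MINER_PATTERNS = {
    "Miner constructor": re.compile(r"var\s+Miner\s*=\s*function"),
    "CoinHive config": re.compile(r"CoinHive\s*\.\s*CONFIG\s*="),
}


def find_miner_indicators(js_source):
    """Return the names of all indicator patterns found in js_source."""
    return [
        name
        for name, pattern in MINER_PATTERNS.items()
        if pattern.search(js_source)
    ]


# Hypothetical script bodies, for illustration only.
sample_js = "self.CoinHive.CONFIG={}; var Miner=function(a,b){};"
clean_js = "function showAlerts(){ return 1; }"

print(find_miner_indicators(sample_js))
print(find_miner_indicators(clean_js))
```

A match is only a hint that a script deserves a closer look. Simple string checks like this are trivial to dodge by renaming things, so treat an empty result as "nothing obvious" and not as a clean bill of health.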

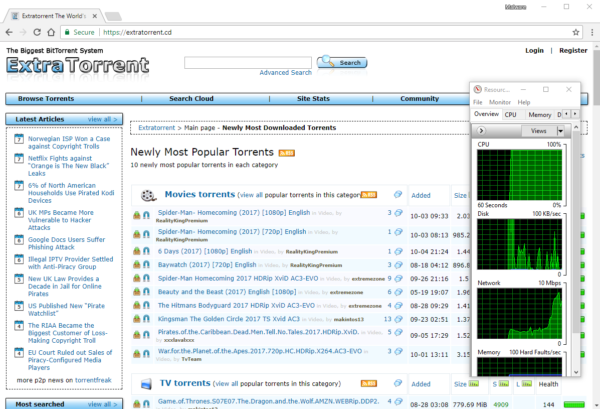

Coinhive is something we’ve been blocking since October. It allows you to place cryptocurrency mining scripts on your webpage, similar to how regular adverts are placed, except it’ll try to make as much use of your machine as possible to whip up some Monero coins for the site owner. Here’s an example of a site pushing a PC to the limit via mining scripts in the background. Check out the resources being gobbled up on the right-hand side:

In an age of people leaving dozens of tabs open and going for dinner, websites running scripts that ramp you up to 100 percent CPU usage and generate a fair bit of heat in the bargain just aren’t my thing. DIY fake porn tech demands high system specs, and its users are simultaneously making content and downloading it, which makes them prime targets for a spot of potentially surreptitious cryptomining taking place behind the scenes.

We’ve seen a few mentions of other Deepfake aficionados complaining about dodgy sites, and we’ll be taking a closer look to see what’s out there. All in all, you’re probably better off steering clear of the whole mess and taking up a less stress-inducing hobby (for you and your computer).

Keep your security tools up to date, make informed decisions about what you want to block, and keep those CPU temperatures down to a minimum!