When content creators flag one of their own videos as inappropriate for children, we expect YouTube’s AI moderator to accept this and move on. But YouTube’s moderation bot doesn’t seem to get it. Not only can it prevent creators from correcting a miscategorization, but its synthetic will is also final, no questions asked, unless the content creator appeals.

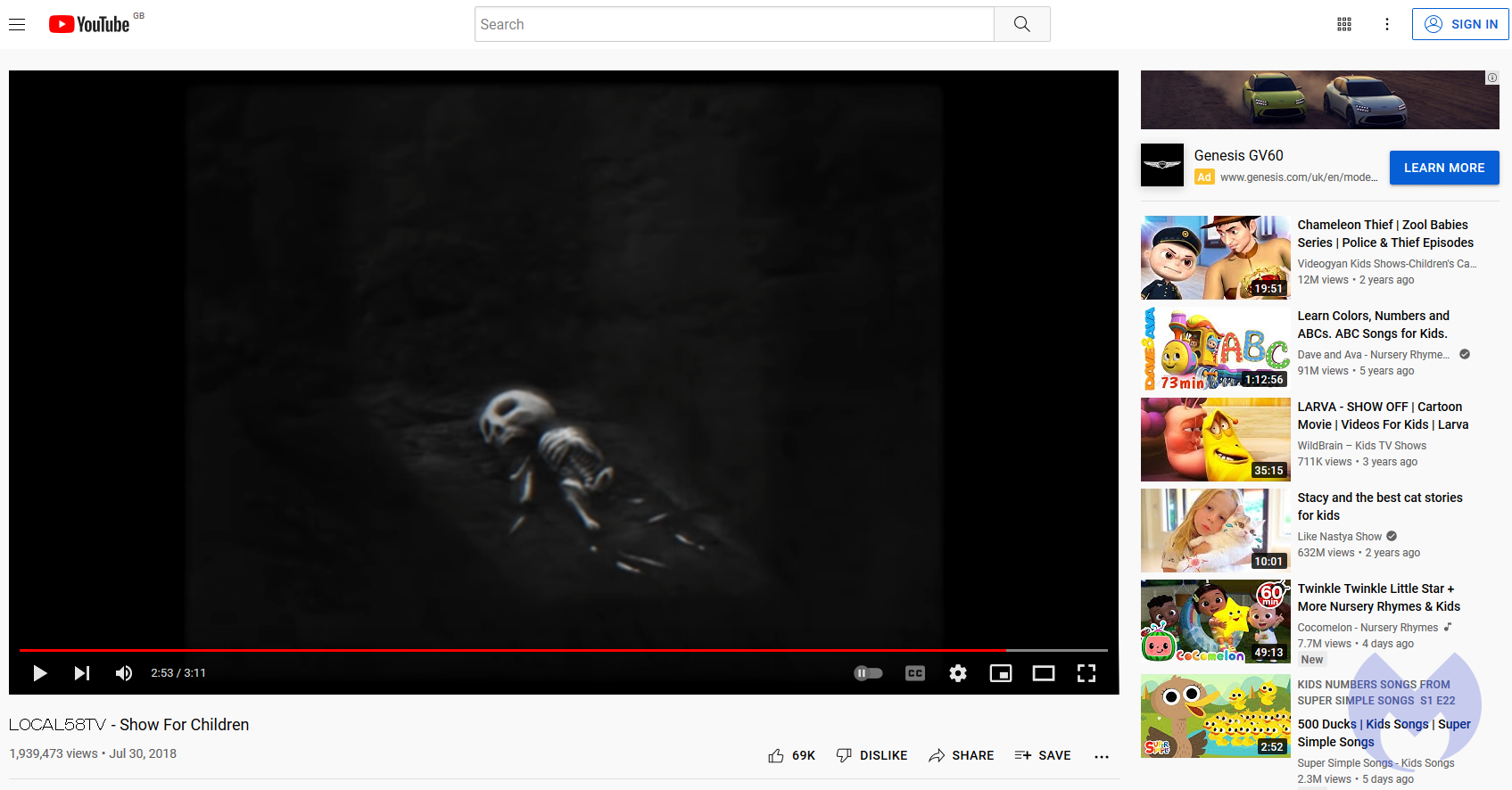

This is precisely what happened to Kris Straub, creator of the horror series Local58TV on YouTube. When he checked his account over the weekend, he noticed that YouTube’s AI had erroneously marked his three-minute video, “Show For Children”, as “Made for kids”, with that label listed under “Policy reason”.

Per YouTube, “Made for kids” means:

> This content has been set as made for kids to help you comply with the Children’s Online Privacy Protection Act (COPPA) and/or other applicable laws.
>
> Features like personalized ads and comments are disabled on videos set as made for kids.
>
> Videos that are set as made for kids are more likely to be recommended alongside other kids’ videos.

And YouTube did indeed display it alongside other child-friendly videos:

Straub didn’t think twice about taking to Twitter to air his disbelief:

Because the video was wrongly marked as made for kids, it could even end up in the “YouTube Kids” app, a separate service that shows only filtered YouTube videos intended for children.

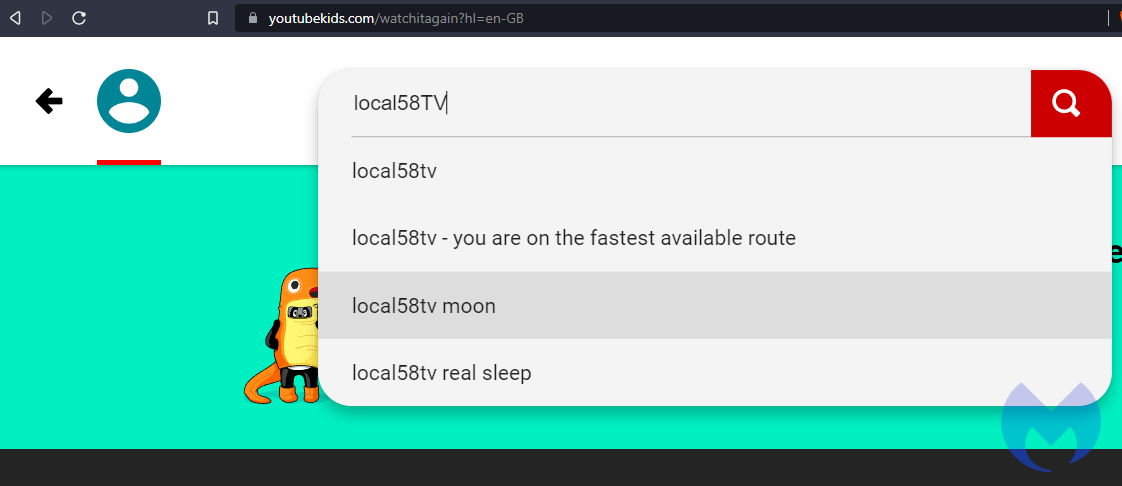

Thankfully, “Show For Children” didn’t appear in YouTube Kids search results when I tested. It’s interesting to note, however, that when I searched for “Local58TV”, the app showed me pre-filled suggestions, as you can see below:

Fortunately, YouTube has since gotten back to Straub and resolved the matter. The company also let him mark his video as “not made for kids”, an option that had previously been greyed out.

Straub also left the YouTube team a question it has yet to answer.

I think we already know the answer to that.