Seven months after ChatGPT burst into our lives, it seems the lustre of the chatbot-that’s-going-to-change-everything is starting to fade. A new survey by Malwarebytes exposes deep reservations about ChatGPT, with optimism in startlingly short supply. Of the respondents familiar with ChatGPT:

- 81% were concerned about possible security and safety risks.

- 63% don’t trust the information it produces.

- 51% would like to see work on it paused so regulations can catch up.

The concerns expressed in the survey mirror the trajectory of the news about ChatGPT since its introduction in November 2022.

ChatGPT is a type of Artificial Intelligence (AI) developed by the organization OpenAI. Trained on vast amounts of text data, it can generate human-like responses to prompts or questions. And just in case you don’t know what that means, you should know that the last sentence you read was written by ChatGPT as part of a response to a request to “Describe ChatGPT in a single paragraph.” This is the whole text it generated:

ChatGPT is a powerful language model developed by OpenAI, based on the GPT-3.5 architecture. Trained on vast amounts of text data, it can generate human-like responses to prompts or questions. With its deep learning capabilities, ChatGPT understands context and produces coherent and contextually relevant text. It can assist with various tasks such as answering questions, providing explanations, and offering suggestions. Although it’s not a human and lacks real-time awareness, ChatGPT’s ability to generate text across multiple languages makes it a versatile tool, albeit one that should be cross-verified with reliable sources for accuracy.

When people explored its capabilities in the days and weeks after its launch, it seemed almost miraculous—a wonder tool that could do everything from creating computer programs and replacing search engines, to writing students’ essays and penning punk rock songs. Its release kick-started a race to disrupt everything with AI, and integrate ChatGPT-like interfaces into every conceivable tech product.

But those who know the hype cycle know that the Peak of Inflated Expectations is quickly followed by the Trough of Disillusionment. Predictably, ChatGPT’s rapid ascent was met by an equally rapid backlash as its shortcomings became apparent.

Chief among them is ChatGPT’s propensity to “hallucinate”, the euphemism that data scientists give to untruths created by machine learning models. Perhaps the best example of just how consequential hallucinations can be is Mata v. Avianca, Inc., a court case in which a lawyer found himself in serious hot water after citing numerous non-existent legal cases hallucinated by ChatGPT when he used it as a research tool.

Against that backdrop, Malwarebytes decided to poll its vast pool of newsletter subscribers to see how they felt about ChatGPT, six months after its launch.

Despite all the hype and hoopla surrounding it, only 35% of our tech-savvy respondents agreed with the statement “I am familiar with ChatGPT,” significantly fewer than the 50% who disagreed.

Those who claimed to be familiar with ChatGPT did not have a rosy outlook. This is what they told us.

Not accurate or trustworthy

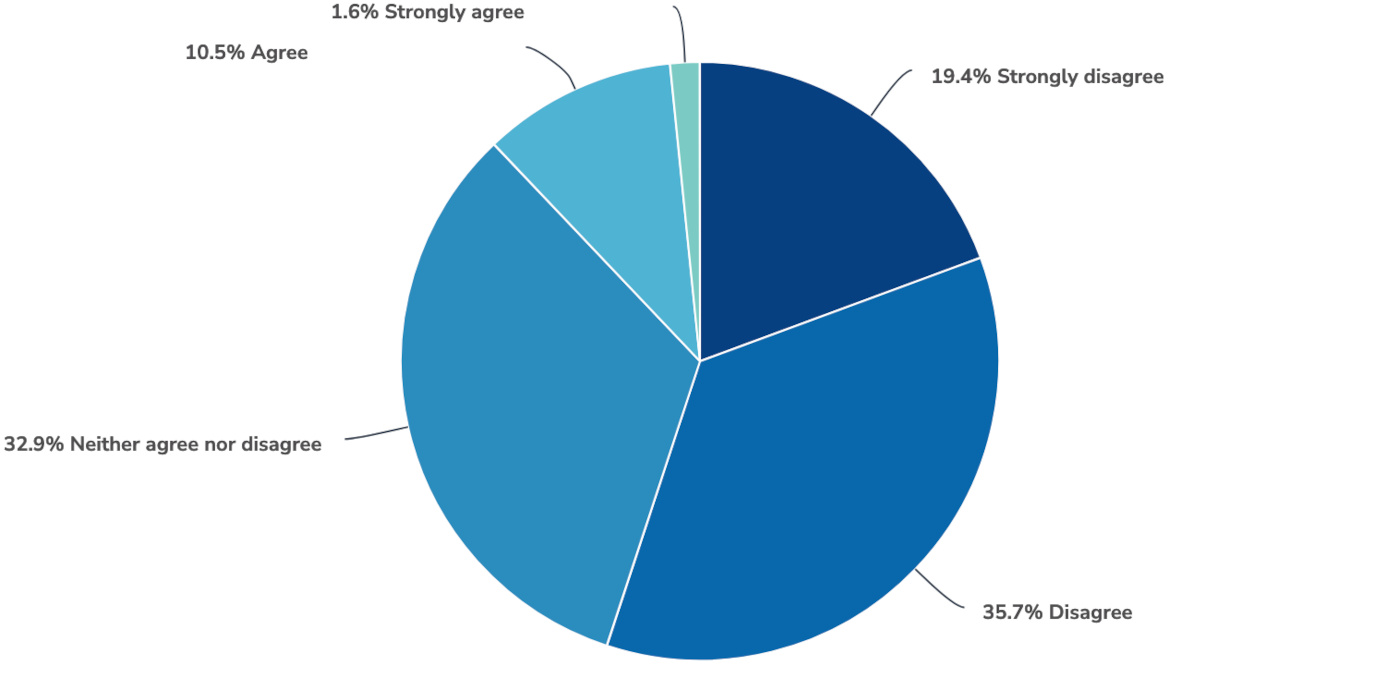

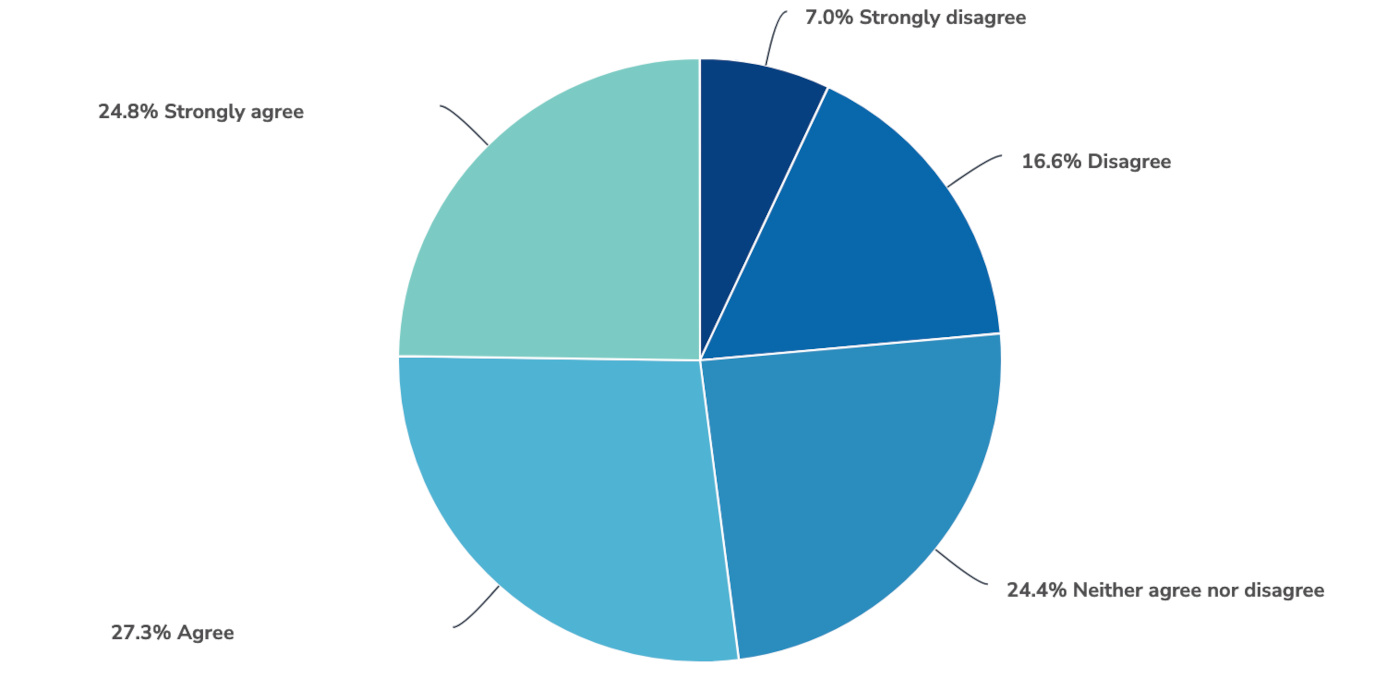

The first issue for ChatGPT is that our respondents don’t consider it accurate or trustworthy. Only 12% agreed with the statement “The information produced by ChatGPT is accurate,” while 55% disagreed, a huge discrepancy.

The responses were similarly bleak for the statement “I trust the information produced by ChatGPT,” with only 10% agreeing and a huge 63% disagreeing.

A risk to security and safety

Not only was ChatGPT seen as untrustworthy, it was also perceived as a negative influence on safety and security, with few seeing it as a tool that will improve safety, and an overwhelming majority seeing it as a source of risk.

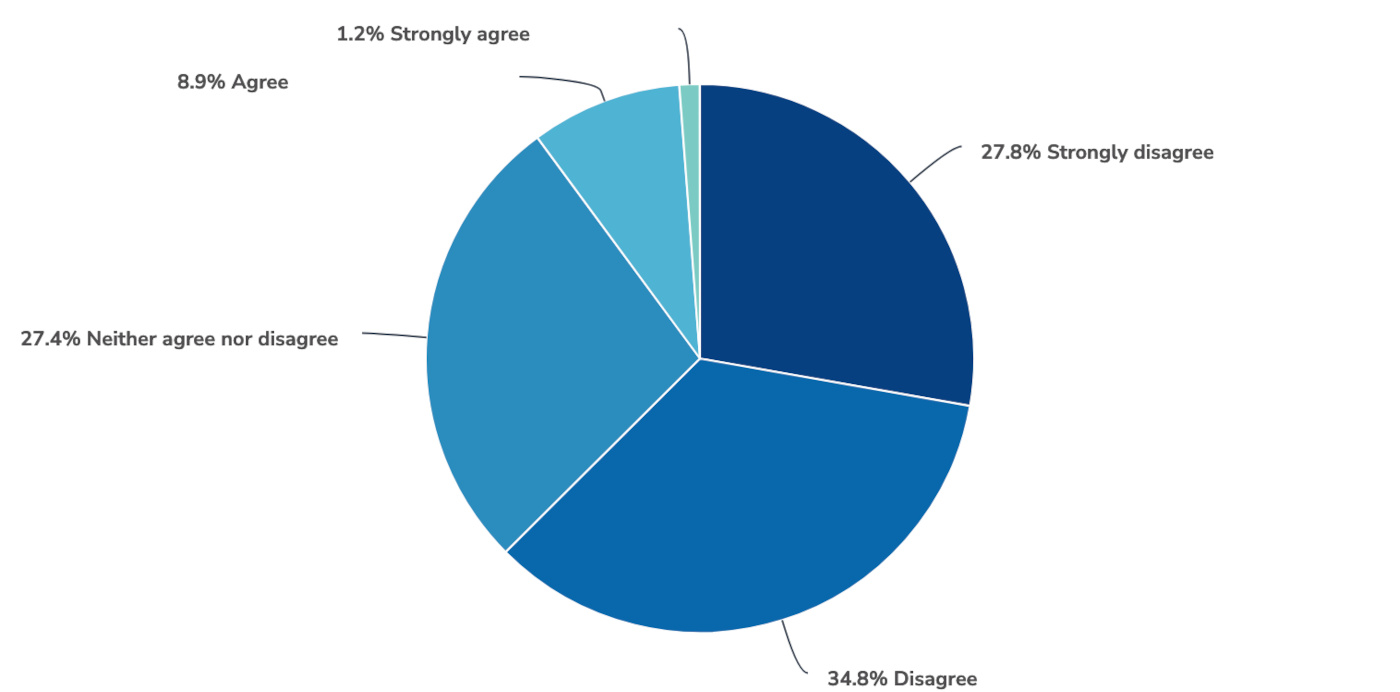

51% disagreed with the statement “ChatGPT and other AI tools will improve Internet safety,” dwarfing the tiny percentage that see it as a positive for safety.

Worse still, an extraordinary 81% were concerned about the possible security and/or safety risks.

They aren’t alone. In March 2023, a raft of tech luminaries signed a letter that said “We call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4.” The letter pulled no punches on the “profound risks” posed by “AI systems with human-competitive intelligence”:

Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization?

The letter calls for the pause to be used to “jointly develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts.”

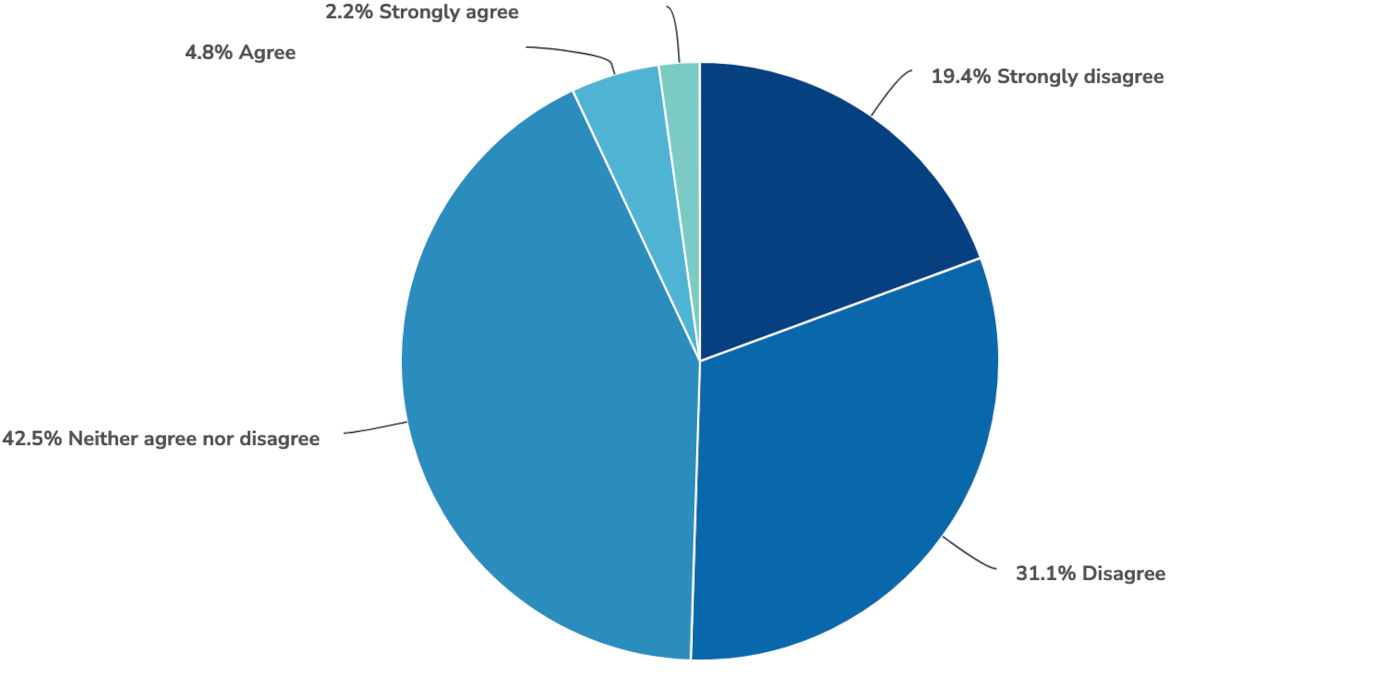

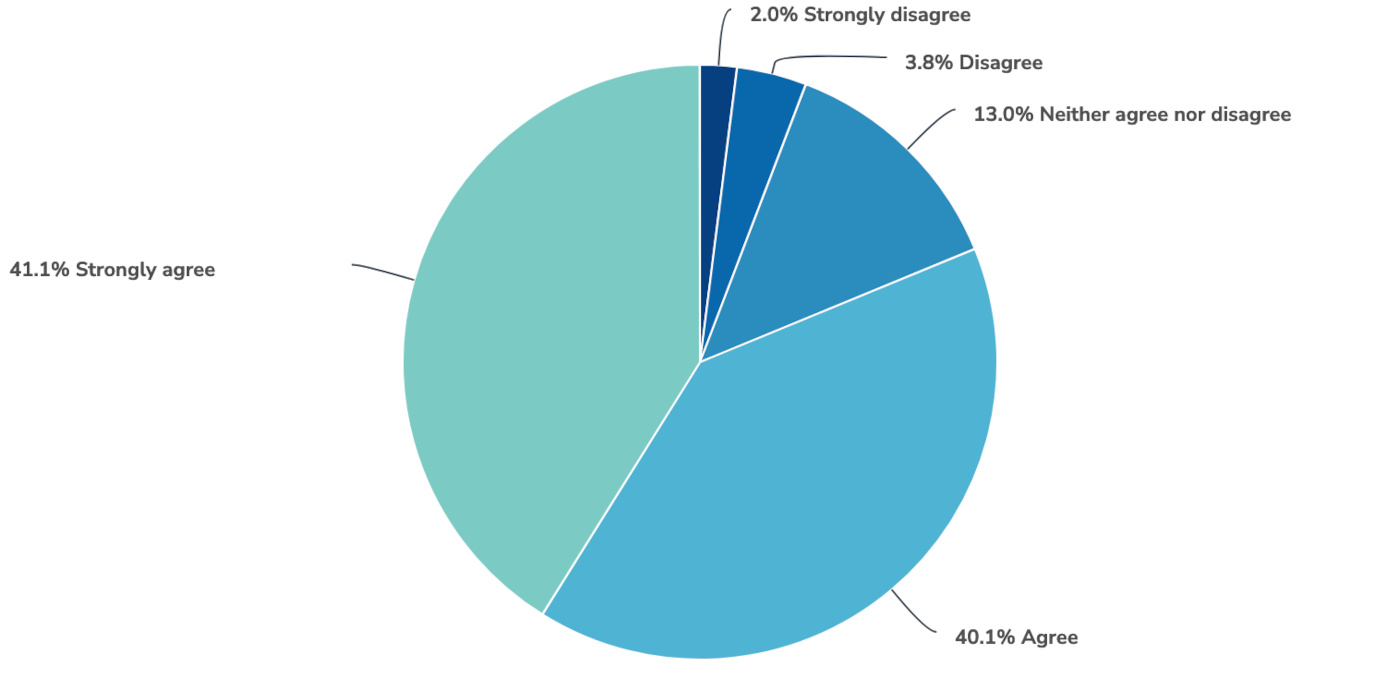

We put the idea to our respondents and 52% of those familiar with ChatGPT agreed, while fewer than half that number disagreed.

Conclusion

Our survey showed that an overwhelming number of respondents familiar with ChatGPT were concerned about the risks it poses to security and safety. They also don’t trust the information it produces, and would like to see a pause in development so that regulation can catch up. What remains to be seen is whether this is simply a singular moment of anxiety or a trend that will persist.

An AI revolution has been gathering pace for a very long time, and many specific, narrow applications have been enormously successful without stirring this kind of mistrust. For example, at Malwarebytes, machine learning and AI have been used for years to improve efficiency, identify malware, and boost the overall performance of many technologies.

ChatGPT is a different beast, though. It is a generalized AI tool that could help or supplant humans across a broad range of knowledge work, from coding and composing songs to making malware and spreading misinformation.

The uncertainty around how ChatGPT will change our lives, and whether it will take our jobs, is compounded by the mysterious way in which it works. It is an unknown quantity to everyone, even its creators. Machine learning models like ChatGPT are “black boxes” with emergent properties that appear suddenly and unexpectedly as the amount of computing power used to create them increases.

Real-world emergent properties have included the ability to perform arithmetic, take college-level exams, and identify the intended meaning of words. The ability to perform these tasks could not be predicted from smaller models, and today’s models cannot be used to predict what the next generation of larger models will be capable of.

That leaves us facing a very uncertain future, both individually and collectively. The continuum of viewpoints held by serious commentators ranges, quite literally, from those who think AI is an existential risk to those who think it will save the world. Given the stakes, the caution of our respondents is no surprise.
